Welcome!

Introduction

Thank you for your interest in EOCV-Sim! (EasyOpenCV Simulator)

This tool was built to help FTC teams learn and develop their seasonal OpenCV algorithms in a straightforward way, with some extra utilities to make that process a little smoother.

The main purpose of this software is to simulate the package and class structure of OpenFTC’s EasyOpenCV and the parts of the FTC SDK relevant to vision, while also providing OpenCV functionality and a simple GUI.

Because the simulated structure mirrors EasyOpenCV’s API, your imports and class names stay the same as they would be on an actual robot. When you’re ready to deploy, you can copy your vision code directly into your Android Studio project without any changes.

While the main focus of this software is the FIRST Tech Challenge competition, it works just as well for general-purpose computer vision projects. We encourage anyone to use it as a learning tool, inside or outside of FTC.

We’re always open to suggestions if you see something that could be added or improved! GitHub Issues are open for suggestions and bug reports.

Buy me a coffee

For the past 4 years I’ve been developing and maintaining learning tools for robotics students to get a better grasp of programming and computer vision. Now that I’ve graduated from the robotics competition and I’m heading to college, my goal is to keep these tools maintained and improving for future generations — completely free and open source. If you’d like to support that, a donation on Buy Me a Coffee goes a long way as life gets busier.

- Sebastian Erives, deltacv’s main dev

This article was last modified...

On 2026-05-07 23:10:31 -06:00

By Sebastian Erives

See it here:08db7ff339abde0b109efab8cb1225fc35b60156

Downloading EOCV-Sim

Prerequisites: Java

EOCV-Sim requires Java 17 or newer to run. If you don’t have it installed, grab it from one of these sources:

- Oracle JDK — official, free for personal use

- Adoptium (Eclipse Temurin) — recommended open-source alternative

Once installed, verify your Java version by opening a terminal and running:

java -version

It should print 17 or higher. If it doesn’t, make sure your JAVA_HOME environment variable points to the correct installation.
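If you would rather check from code than from the terminal, the same test can be sketched in a few lines of Java. This is only an illustration: the class name `JavaVersionCheck` is made up for this example and is not part of EOCV-Sim, and `Runtime.version().feature()` itself needs Java 10 or newer to compile.

```java
// Hypothetical helper, not part of EOCV-Sim: compares a Java feature version
// against the Java 17 minimum mentioned above.
final class JavaVersionCheck {

    static final int MINIMUM_FEATURE_VERSION = 17;

    // True when the given feature version (17 for Java 17, 21 for Java 21, ...)
    // meets the minimum.
    static boolean isSupported(int featureVersion) {
        return featureVersion >= MINIMUM_FEATURE_VERSION;
    }

    public static void main(String[] args) {
        // Runtime.version().feature() returns the major ("feature") release number
        // of the JVM that is running this code.
        int running = Runtime.version().feature();
        System.out.println("Running on Java " + running
                + (isSupported(running) ? " (OK)" : " (too old, 17+ is needed)"));
    }
}
```

Running this with the same `java` command you plan to use for the sim tells you which installation is actually being picked up.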

Download Latest Release

EOCV-Sim

Download the latest release and get started in seconds.


Running EOCV-Sim

Once downloaded, double-click the jar file to launch it, just like any other executable.

You can also run it from the command line. Navigate to the folder containing the jar file using cd, then run:

java -jar "EOCV-Sim-X.X.X-all.jar"

Replace X.X.X with the actual version number, e.g. 3.1.0.

PaperVision is already included!

PaperVision ships bundled inside EOCV-Sim — no separate download needed. Head to the PaperVision docs to start building visual pipelines right away.

This article was last modified...

On 2026-05-09 02:30:13 -06:00

By Sebastian Erives

See it here:5dd0de94a8df5775d2271eb3cb8575b85b332338

OpenCV and EasyOpenCV

What is OpenCV?

OpenCV is a library of programming functions aimed at real-time computer vision.

The library has more than 2500 optimized algorithms, including a comprehensive set of both classic and state-of-the-art computer vision and machine learning algorithms.

These algorithms can be used to detect and recognize faces, identify objects, classify human actions in videos, track camera movements, track moving objects, extract 3D models of objects, produce 3D point clouds from stereo cameras, stitch images together to produce a high resolution image of an entire scene, find similar images from an image database, remove red eyes from images taken using flash, follow eye movements, recognize scenery and establish markers to overlay it with augmented reality, and more.

So… How do I integrate it into FTC?

The folks from OpenFTC built a library to handle exactly this, wrapping the underlying complexities behind a clean API.

EasyOpenCV integrates OpenCV into the FTC SDK in a straightforward way, bundling the full OpenCV Java library alongside interfaces for accessing internal phone cameras or external webcams and feeding their images into your pipeline.

The EasyOpenCV repo includes examples for both internal camera and webcam usage.


EasyOpenCV Pipelines

What is a pipeline?

A pipeline is essentially an encapsulation of OpenCV image processing for a specific purpose. Most image processing requires operations to run in sequence rather than in parallel — the output of step A feeds into step B, which feeds into step C, and so on. That’s where the term “pipeline” comes from. (EasyOpenCV docs)
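The "output of step A feeds into step B" idea can be sketched without any OpenCV at all. The toy below is an assumption-laden illustration, not EasyOpenCV code: an "image" is just an array of 0-255 gray values, and the names `PipelineSketch`, `INVERT` and `THRESHOLD` are invented for this example.

```java
import java.util.Arrays;
import java.util.function.Function;
import java.util.function.UnaryOperator;

// Toy illustration only: each step is a function from image to image,
// and the pipeline is those functions chained in sequence.
final class PipelineSketch {

    // Step A: invert every pixel.
    static final UnaryOperator<int[]> INVERT = pixels -> {
        int[] out = new int[pixels.length];
        for (int i = 0; i < pixels.length; i++) {
            out[i] = 255 - pixels[i];
        }
        return out;
    };

    // Step B: binary threshold at 128.
    static final UnaryOperator<int[]> THRESHOLD = pixels -> {
        int[] out = new int[pixels.length];
        for (int i = 0; i < pixels.length; i++) {
            out[i] = pixels[i] >= 128 ? 255 : 0;
        }
        return out;
    };

    // The pipeline: the output of step A becomes the input of step B.
    static final Function<int[], int[]> PIPELINE = INVERT.andThen(THRESHOLD);

    public static void main(String[] args) {
        int[] result = PIPELINE.apply(new int[] {0, 100, 200});
        System.out.println(Arrays.toString(result)); // prints [255, 255, 0]
    }
}
```

Swapping the order of the two steps gives a different result, which is why the sequence matters.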

EasyOpenCV implements this through an abstract OpenCvPipeline class that you extend when writing your own pipeline. Here’s a minimal pipeline that passes the input image through unchanged:

import org.opencv.core.Mat;

import org.openftc.easyopencv.OpenCvPipeline;

public class EmptyPipeline extends OpenCvPipeline {

@Override

public Mat processFrame(Mat input) {

return input;

}

}

The processFrame method from OpenCvPipeline must always be overridden — it’s where all your vision processing happens. It gets called every time a new frame arrives from the camera (or from a static image or video file, in the case of EOCV-Sim).

An OpenCV Mat (short for matrix) is the basic data structure used throughout OpenCV. For our purposes it holds image data, and it’s the building block for all image processing operations.

Whatever Mat you return from processFrame is what gets displayed in the live viewport. In the example above, we’re returning the input directly, so the image is shown as-is.

The simplest processing you can do in OpenCV is converting an image’s color space. The following pipeline converts the input to grayscale:

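import org.opencv.core.Mat;

import org.opencv.imgproc.Imgproc;

import org.openftc.easyopencv.OpenCvPipeline;

public class GrayPipeline extends OpenCvPipeline {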

@Override

public Mat processFrame(Mat input) {

Imgproc.cvtColor(input, input, Imgproc.COLOR_RGBA2GRAY);

return input;

}

}

Note the conversion flag used: Imgproc.COLOR_RGBA2GRAY. This tells OpenCV to convert the input from the RGBA color space to grayscale.

EasyOpenCV always passes RGBA frames into the pipeline (red, green, blue, and alpha channels). This means color space conversions should use the RGB-ordered flags rather than the BGR-ordered ones, for example Imgproc.COLOR_RGBA2RGB, Imgproc.COLOR_RGB2HSV, Imgproc.COLOR_RGB2YCrCb, etc.
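To make the RGBA-to-grayscale conversion less abstract, here is what it does to a single pixel, written as plain Java arithmetic rather than an OpenCV call. OpenCV documents the gray value as 0.299·R + 0.587·G + 0.114·B, with the alpha channel dropped. The class and method names are hypothetical, and OpenCV's own rounding may differ slightly from this sketch.

```java
// Plain-Java sketch of the per-pixel math behind an RGBA -> gray conversion.
// Not an OpenCV call; names are made up for illustration.
final class RgbaToGraySketch {

    // r, g, b, a are channel values in the 0-255 range.
    static int toGray(int r, int g, int b, int a) {
        // The alpha channel is intentionally ignored: it does not affect brightness.
        return (int) Math.round(0.299 * r + 0.587 * g + 0.114 * b);
    }

    public static void main(String[] args) {
        System.out.println(toGray(255, 0, 0, 255)); // pure red
        System.out.println(toGray(0, 255, 0, 255)); // pure green
        System.out.println(toGray(0, 0, 255, 255)); // pure blue
    }
}
```

Green contributes the most to the gray value and blue the least, which is why a pure green pixel comes out much brighter than a pure blue one.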


Creating and Running a Pipeline

Lifecycle

OpenCvPipelines are the main executable unit in EOCV-Sim, and they can be created as explained here. The lifecycle is automatically managed by the sim, which calls:

- init, before the first processFrame

- processFrame, every time a new frame is dispatched from an Input Source

- onViewportTapped, when the image displayed on the UI is clicked with the mouse (or tapped if running the pipeline on a phone)

import org.opencv.core.Mat;

import org.openftc.easyopencv.OpenCvPipeline;

public class SamplePipeline extends OpenCvPipeline {

@Override

public void init(Mat input) {

// Executed before the first call to processFrame

}

@Override

public Mat processFrame(Mat input) {

// Executed every time a new frame is dispatched

return input; // Return the image that will be displayed in the viewport

// (In this case the input mat directly)

}

@Override

public void onViewportTapped() {

// Executed when the image display is clicked by the mouse or tapped

// This method is executed from the UI thread, so be careful not to

// perform any sort of heavy processing here! Your app might hang otherwise

}

}
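The call order can be made concrete with a toy driver. This is a stand-in for the scheduling described in this section, not EOCV-Sim's actual code: frames are plain strings instead of Mats, and `LifecycleSketch`, `ToyPipeline` and `run` are names invented for the example.

```java
import java.util.ArrayList;
import java.util.List;

// Toy stand-in for the simulator's scheduling; none of these are EOCV-Sim classes.
final class LifecycleSketch {

    // Minimal pipeline shape: init is optional, processFrame is mandatory.
    abstract static class ToyPipeline {
        void init(String firstFrame) {}
        abstract String processFrame(String frame);
    }

    // Feeds frames to the pipeline, calling init once before the first processFrame.
    static List<String> run(ToyPipeline pipeline, List<String> frames) {
        List<String> displayed = new ArrayList<>();
        boolean initialized = false;
        for (String frame : frames) {
            if (!initialized) {
                pipeline.init(frame);
                initialized = true;
            }
            displayed.add(pipeline.processFrame(frame));
        }
        return displayed;
    }

    // Runs three frames through a pipeline that records which methods were called.
    static List<String> demo() {
        List<String> calls = new ArrayList<>();
        ToyPipeline pipeline = new ToyPipeline() {
            @Override void init(String firstFrame) { calls.add("init"); }
            @Override String processFrame(String frame) {
                calls.add("processFrame");
                return frame;
            }
        };
        run(pipeline, List.of("frame0", "frame1", "frame2"));
        return calls;
    }

    public static void main(String[] args) {
        System.out.println(demo()); // prints [init, processFrame, processFrame, processFrame]
    }
}
```

Anything that must happen exactly once, such as allocating buffers, belongs in init; everything that depends on the current frame belongs in processFrame.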

You can learn more about pipelines in their dedicated section.

Adding pipelines to EOCV-Sim

There are two ways to add your own pipelines:

- Workspaces, which are the fastest and most flexible option — pipelines are built on-the-fly and changes are applied immediately.

- Building from source, which allows the use of other JVM languages such as Kotlin, but is slower since you have to rebuild and reopen the sim every time you make changes to your pipelines.

Workspaces are the recommended method for development if you use Java. You can use any IDE or text editor for them. We officially support Android Studio (partially), VS Code, and IntelliJ IDEA.

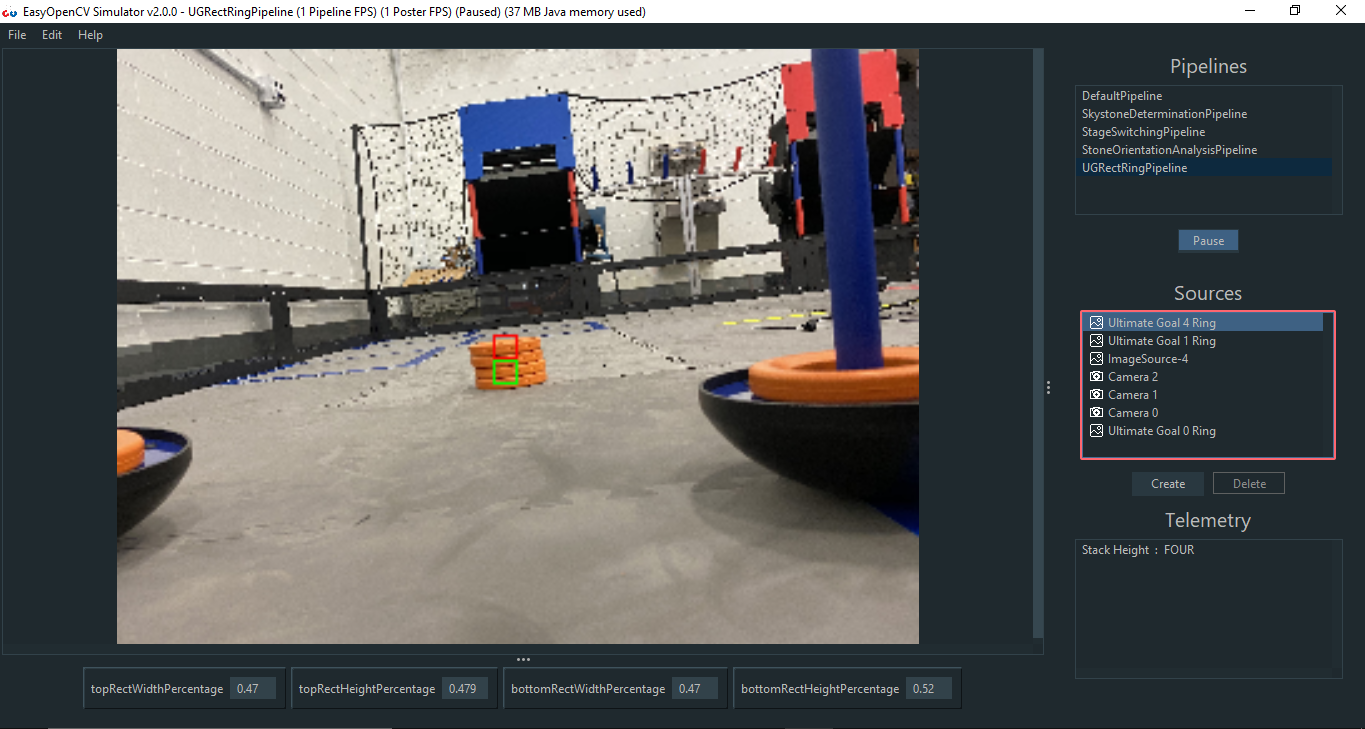

Executing a pipeline

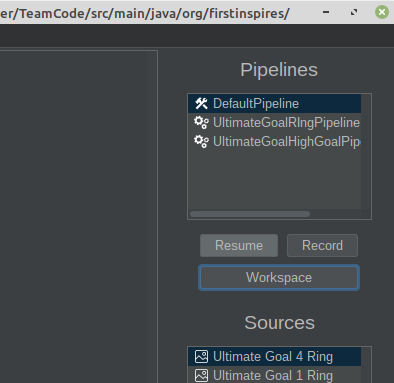

Once you’ve added a pipeline using either of the methods above, running it is straightforward. Your pipeline should appear in the “Pipelines” list on the right side of the UI:


Click on a pipeline to select it, and the lifecycle described above will start running in your code.

The gears icon on SamplePipeline indicates it was added via the Workspaces method. The hammer and wrench icon on DefaultPipeline indicates it was added via Build from Source.

Introduction to VisionPortal

New for the 2023-2024 season is the VisionPortal interface, a new technology that builds OpenCV vision right into the FTC SDK, making computer vision more accessible and easier to code for FIRST Tech Challenge than ever before. Creating a camera stream with VisionPortal takes just a few concise lines of code:

VisionPortal myVisionPortal;

// Create a VisionPortal, with the specified camera, and assign it to a variable.

myVisionPortal = VisionPortal.easyCreateWithDefaults(hardwareMap.get(WebcamName.class, "Webcam 1"), ...);

Going into more technical details, VisionPortal is a thin API built on top of EasyOpenCV. So, not only is it easier to use, but it also takes advantage of the proven reliability of EasyOpenCV, used by hundreds (or even thousands!) of teams ever since 2019.

We won’t go further in depth on the functionality of VisionPortal for the purposes of this documentation, but we highly advise checking out ftc-docs for more information about the usage of this API.

VisionProcessor

The VisionProcessor interface was introduced alongside VisionPortal to bring the OpenCvPipeline usage pattern to this new API. If we look at the interface, it is quite similar in concept:

import android.graphics.Canvas;

import org.firstinspires.ftc.robotcore.internal.camera.calibration.CameraCalibration;

import org.firstinspires.ftc.vision.VisionProcessor;

import org.opencv.core.Mat;

public class SampleProcessor implements VisionProcessor {

@Override

public void init(int width, int height, CameraCalibration calibration) {

// Code executed on the first frame dispatched into this VisionProcessor

}

@Override

public Object processFrame(Mat frame, long captureTimeNanos) {

// Actual computer vision magic will happen here

return null; // No context object to hand over to onDrawFrame

}

@Override

public void onDrawFrame(Canvas canvas, int onscreenWidth, int onscreenHeight, float scaleBmpPxToCanvasPx, float scaleCanvasDensity, Object userContext) {

// Cool feature: This method is used for drawing annotations onto

// the displayed image, e.g. outlining and indicating which objects

// are being detected on the screen, using a GPU-accelerated, high-quality

// graphics Canvas that allows for crisp shapes.

}

}

We can attach this processor to a VisionPortal to start dispatching camera frames into our custom computer vision algorithms:

VisionPortal myVisionPortal;

SampleProcessor sampleProcessor = new SampleProcessor();

// Create a VisionPortal, with the specified camera and the

// SampleProcessor we created earlier, and assign it to a variable.

myVisionPortal = VisionPortal.easyCreateWithDefaults(hardwareMap.get(WebcamName.class, "Webcam 1"), sampleProcessor);

This article was last modified...

On 2026-03-21 01:07:14 -06:00

By Sebastian Erives

See it here:9f222a6ce64832a9c20251660c897366ca06ec74

Creating and Running a VisionProcessor

We’ll start off with a familiar example: the simplest processing that can be done in OpenCV is converting an image from one color space to another. The following VisionProcessor takes the input frame and converts it to grayscale:

import android.graphics.Canvas;

import org.firstinspires.ftc.robotcore.internal.camera.calibration.CameraCalibration;

import org.firstinspires.ftc.vision.VisionProcessor;

import org.opencv.core.Mat;

import org.opencv.imgproc.Imgproc;

public class GrayProcessor implements VisionProcessor {

@Override

public void init(int width, int height, CameraCalibration calibration) {

// Not useful in this case, but we do need to implement it either way

}

@Override

public Object processFrame(Mat frame, long captureTimeNanos) {

Imgproc.cvtColor(frame, frame, Imgproc.COLOR_RGB2GRAY);

return null; // No context object

}

@Override

public void onDrawFrame(Canvas canvas, int onscreenWidth, int onscreenHeight, float scaleBmpPxToCanvasPx, float scaleCanvasDensity, Object userContext) {

// Not useful either

}

}

The key difference from OpenCvPipeline is in the way we display the gray image - instead of returning a Mat, any change made to the frame object will be displayed on the screen accordingly. Although it is advisable to use onDrawFrame instead, this will serve well for our example purposes.

Note that we return null from processFrame, which means that the userContext in onDrawFrame will be null as well. Anything returned from processFrame is passed into onDrawFrame as userContext.

Adding processors to EOCV-Sim

There are two ways to add your own processors:

- Workspaces, which are the fastest and most flexible method of using the sim, since the code is built on-the-fly and changes are applied immediately.

- Building from source, which allows the use of other JVM languages such as Kotlin, but it is slower since you have to recompile and wait for the sim to open every time you make changes in your code.

Workspaces are the recommended method for development if you use Java. You can use any IDE or text editor for them.

Executing a processor

Once you have added a processor using any of the methods mentioned before, executing it is very simple. Your processor should appear in the “Pipelines” list once it’s part of a workspace or added to EOCV-Sim’s source code:


You can simply select the processor in this list, and it will begin execution right away.


OpModes in EOCV-Sim

To enable usage of the VisionPortal API within EOCV-Sim, newer versions of the simulator support so-called “OpModes”. FIRST Tech Challenge teams should already be familiar with this concept: OpModes work as “executable units” that let users write and run their custom robot code in a logical and simple manner. They split robot operation into different “programs” that can be selected and switched to perform different tasks, depending on what is needed through the different stages of a robot match.

Because of the way VisionPortal works, it is ideal to call this API from within OpModes. There, setup code tells the API which cameras to use, the resolution of the camera stream, whether a live preview is wanted, and which VisionProcessors to run (several can run at once). OpModes are also a convenient place to develop and test AprilTag localization math.

Lifecycle

OpModes have a very specific and simple flow of execution:

- The init() method, which is executed when you press init after selecting the OpMode

- The loop() method, which is executed repeatedly once init() has finished and you press start

- The OpMode should be able to stop at any time when requested by pressing the stop button, which becomes available right after starting.


OpMode Structure

Just like OpenCvPipeline, OpMode is a class that you can extend and inherit basic methods from:

import com.qualcomm.robotcore.eventloop.opmode.OpMode;

import com.qualcomm.robotcore.eventloop.opmode.TeleOp;

/*

* This contains an example of an iterative (Non-Linear) "OpMode".

* An OpMode is a 'program' that runs in either the autonomous or the teleop period of an FTC match.

* The names of OpModes appear on the menu of the FTC Driver Station.

* When a selection is made from the menu, the corresponding OpMode

* class is instantiated on the Robot Controller and executed.

*/

@TeleOp(name="Example OpMode")

public class ExampleOpMode extends OpMode {

/*

* Code to run ONCE when the driver hits INIT

*/

@Override

public void init() {

telemetry.addData("Status", "Initialized");

}

/*

* Code to run REPEATEDLY after the driver hits INIT, but before they hit PLAY

*/

@Override

public void init_loop() {

}

/*

* Code to run ONCE when the driver hits PLAY

*/

@Override

public void start() {

}

/*

* Code to run REPEATEDLY after the driver hits PLAY but before they hit STOP

*/

@Override

public void loop() {

}

/*

* Code to run ONCE after the driver hits STOP

*/

@Override

public void stop() {

}

}
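The order in which these five methods fire can be sketched with a small harness. This is a toy model under stated assumptions, not the FTC SDK or EOCV-Sim scheduler: `OpModePhasesSketch`, `ToyOpMode` and `run` are invented names, and the button presses are simulated by fixed iteration counts.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model of the iterative OpMode phases; not SDK code.
final class OpModePhasesSketch {

    // Same five method names as the iterative OpMode above, minus the SDK.
    abstract static class ToyOpMode {
        abstract void init();
        void init_loop() {}
        void start() {}
        abstract void loop();
        void stop() {}
    }

    // Pretends the driver lets init_loop run initLoops times before pressing PLAY,
    // and lets loop run runLoops times before pressing STOP.
    static void run(ToyOpMode opMode, int initLoops, int runLoops) {
        opMode.init();
        for (int i = 0; i < initLoops; i++) {
            opMode.init_loop();
        }
        opMode.start();
        for (int i = 0; i < runLoops; i++) {
            opMode.loop();
        }
        opMode.stop();
    }

    // Records the order in which the phases are called.
    static List<String> demo() {
        List<String> calls = new ArrayList<>();
        run(new ToyOpMode() {
            @Override void init() { calls.add("init"); }
            @Override void init_loop() { calls.add("init_loop"); }
            @Override void start() { calls.add("start"); }
            @Override void loop() { calls.add("loop"); }
            @Override void stop() { calls.add("stop"); }
        }, 1, 2);
        return calls;
    }

    public static void main(String[] args) {
        System.out.println(demo()); // prints [init, init_loop, start, loop, loop, stop]
    }
}
```

Only init and loop are abstract in this sketch, mirroring the fact that the other phases are often left empty in simple OpModes.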

@Autonomous vs @TeleOp

You might have noticed this particular declaration in the example code earlier. It is known as an “annotation” in Java. In this specific case, the annotation helps the program find your custom OpModes. The key difference between @Autonomous and @TeleOp is simply where your program will be classified within the user interface of the station controls.


Both annotations take a name parameter, which helps display a more user-friendly name for your OpModes when selecting them:

@TeleOp(name = "Concept: AprilTag")


LinearOpMode

LinearOpMode has a different structure than OpMode, but the idea is basically the same:

@Autonomous(name="Example OpMode")

public class ExampleLinearOpMode extends LinearOpMode {

@Override

public void runOpMode() {

telemetry.addData("Status", "Initialized");

telemetry.update();

// Wait for the game to start (driver presses PLAY)

waitForStart();

// run until the end of the match (driver presses STOP)

while (opModeIsActive()) {

telemetry.addData("Status", "Running");

telemetry.update();

}

}

}

The example overrides a method called runOpMode. Every op mode of type LinearOpMode must implement this method, as it gets called when a user selects and initializes your OpMode within the UI. Note that all linear op modes should have a waitForStart() statement to ensure that the robot will not begin executing the op mode until the driver pushes the start button.

After a start command has been received, the op mode enters a while loop and keeps iterating in this loop until the op mode is no longer active (i.e., until the user pushes the stop button on the Driver Station).

Using VisionPortal within OpModes

We’ll start with a pretty basic example that uses VisionPortal to run the bundled AprilTagProcessor, and then explain it line by line:

import com.qualcomm.robotcore.eventloop.opmode.Autonomous;

import com.qualcomm.robotcore.eventloop.opmode.LinearOpMode;

import com.qualcomm.robotcore.eventloop.opmode.TeleOp;

import org.firstinspires.ftc.robotcore.external.hardware.camera.WebcamName;

import org.firstinspires.ftc.vision.VisionPortal;

import org.firstinspires.ftc.vision.apriltag.AprilTagDetection;

import org.firstinspires.ftc.vision.apriltag.AprilTagProcessor;

@Autonomous(name = "Example VisionPortal OpMode")

public class ExampleVisionPortalOpMode extends LinearOpMode {

/**

* The variable to store our instance of the AprilTag processor.

*/

private AprilTagProcessor aprilTag;

/**

* The variable to store our instance of the vision portal.

*/

private VisionPortal visionPortal;

@Override

public void runOpMode() {

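// Create the AprilTag processor the easy way.

aprilTag = AprilTagProcessor.easyCreateWithDefaults();

// Create the vision portal the easy way.

visionPortal = VisionPortal.easyCreateWithDefaults(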

hardwareMap.get(WebcamName.class, "Webcam 1"), aprilTag);

telemetry.addData(">", "Touch Play to start OpMode");

telemetry.update();

// Wait for the DS start button to be touched.

waitForStart();

if (opModeIsActive()) {

// ...

}

// Save more CPU resources when camera is no longer needed.

visionPortal.close();

}

}

@Autonomous(name = "Example VisionPortal OpMode")

public class ExampleVisionPortalOpMode extends LinearOpMode {

Declares our LinearOpMode and annotates it as an autonomous program. LinearOpMode is often more useful when coding autonomous routines due to its inherent structure.

/**

* The variable to store our instance of the AprilTag processor.

*/

private AprilTagProcessor aprilTag;

/**

* The variable to store our instance of the vision portal.

*/

private VisionPortal visionPortal;

Some convenience variables that will let us store our VisionProcessor and VisionPortal instances that we will be using later on.

@Override

public void runOpMode() {

Overrides the runOpMode method inherited from LinearOpMode, which is executed when the OpMode is initialized. Any code placed inside it runs at that point.

// Create the AprilTag processor the easy way.

aprilTag = AprilTagProcessor.easyCreateWithDefaults();

// Create the vision portal the easy way.

visionPortal = VisionPortal.easyCreateWithDefaults(

hardwareMap.get(WebcamName.class, "Webcam 1"), aprilTag);

This is the key part of our image processing initialization: we create our AprilTagProcessor and VisionPortal instances using their easyCreateWithDefaults() methods, which let us initialize everything by passing only a few parameters.

Let’s put special emphasis on this part:

hardwareMap.get(WebcamName.class, "Webcam 1")

This line defines the source of the images that are passed to the attached VisionProcessors, usually a webcam. “Webcam 1” is the robot configuration name of the image capture device we wish to use; it is commonly the default name automatically assigned by the FTC SDK.

In the case of EOCV-Sim, there are a few other options here, which give more flexibility when testing your vision code.

Using other input sources in your OpModes

| Type | WebcamName |
|---|---|
| USB Camera | Robot configuration name or camera index, e.g. "Webcam 1", "0", "1" |
| Image | Path to an image file |
| Video | Path to a video file |

WebcamName code examples

USB Cameras

hardwareMap.get(WebcamName.class, "Webcam 1");

hardwareMap.get(WebcamName.class, "0"); // Same as "Webcam 1"

hardwareMap.get(WebcamName.class, "1"); // Other webcam

hardwareMap.get(WebcamName.class, "2"); // Other webcam

Images

hardwareMap.get(WebcamName.class, "C:\\Users\\pepito\\Pictures\\OnePixel.png");

Videos

hardwareMap.get(WebcamName.class, "/Users/PepitoRico/Downloads/10PixelsVideo.avi");
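One Java detail matters when passing Windows paths like the image example above: inside a string literal, a backslash starts an escape sequence, so each path separator has to be written as a doubled backslash. The sketch below shows this in plain Java. Forward slashes avoid the escaping altogether and are accepted by Java's own file APIs on Windows; whether EOCV-Sim treats them identically for WebcamName lookups is an assumption here, and the class name is made up.

```java
// Illustration of Java string escaping only; the path is the sample one used above.
final class WindowsPathSketch {

    // Each backslash must be doubled inside a Java string literal.
    static final String ESCAPED = "C:\\Users\\pepito\\Pictures\\OnePixel.png";

    // Forward slashes need no escaping.
    static final String FORWARD = "C:/Users/pepito/Pictures/OnePixel.png";

    public static void main(String[] args) {
        // The doubled backslashes are stored as single characters.
        System.out.println(ESCAPED); // prints C:\Users\pepito\Pictures\OnePixel.png

        // Both spellings describe the same location.
        System.out.println(ESCAPED.replace('\\', '/').equals(FORWARD)); // prints true
    }
}
```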

Drawing annotations using Android Canvas

onDrawFrame() basics

Java classes implementing the VisionProcessor interface inherit 3 methods: init, processFrame, and one more that hasn’t been covered yet:

@Override

public void onDrawFrame(Canvas canvas, int onscreenWidth, int onscreenHeight, float scaleBmpPxToCanvasPx, float scaleCanvasDensity, Object userContext)

onDrawFrame() lets you draw shapes, text, and colors on top of your image processing results — useful for highlighting detected elements, annotating text, drawing 3D overlays on AprilTags, and so on. Because this drawing step runs on a separate thread (in parallel with your vision processing) and is often GPU-accelerated, it lives in its own method rather than inside processFrame.

Android Canvas object

In Android, the Canvas object acts as a virtual drawing surface. You can use it to draw shapes, images, and text on screen. EOCV-Sim mocks this interface so it works on desktop the same way it would inside the FTC SDK on an Android device.

A few things worth knowing about the Canvas:

- Drawing methods: drawRect(), drawCircle(), drawLine(), drawText(), and many more.

- Coordinate system: Origin (0, 0) is at the top-left corner. Positive X goes right, positive Y goes down.

- Color and style: Controlled through the Paint object, which you pass into drawing methods.

// Create a Paint object to set drawing attributes

Paint paint = new Paint();

paint.setColor(Color.RED); // Set the color to red

// Draw a red rectangle on the Canvas

canvas.drawRect(50, 50, 200, 200, paint);

Understanding the Paint object

Think of the Paint object as a combination of a paint bucket (defining the color) and a brush (defining the size and style). You configure it once and pass it to Canvas drawing methods.

The most commonly used attributes:

- Color: setColor(), e.g. paint.setColor(Color.RED)

- Style: setStyle(), one of FILL, STROKE, or FILL_AND_STROKE

- Stroke width: setStrokeWidth(), which controls line thickness

- Text size: setTextSize(), for text annotations and labels

- Typeface: setTypeface(), for custom font styles

Paint paint = new Paint();

paint.setColor(Color.BLUE);

paint.setStyle(Paint.Style.FILL);

Most Canvas methods take a Paint as their last argument, so you can reuse the same object across multiple draw calls to keep consistent styling.

// Draw a blue filled circle on the Canvas

canvas.drawCircle(150, 150, 100, paint);
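On Android, `setColor()` takes a single int with the alpha, red, green and blue channels packed into it, and constants such as `Color.RED` and `Color.BLUE` are just predefined values of that kind. The plain-Java sketch below shows how the packing works, which helps when you want a color that has no named constant. The class and method names are hypothetical, and whether EOCV-Sim's desktop Canvas implementation offers its own helper for this is not stated on this page.

```java
// Plain-Java sketch of ARGB color packing; names are invented for illustration.
final class ArgbSketch {

    // Packs four 0-255 channel values into one int laid out as 0xAARRGGBB.
    static int pack(int alpha, int red, int green, int blue) {
        return (alpha & 0xFF) << 24
                | (red & 0xFF) << 16
                | (green & 0xFF) << 8
                | (blue & 0xFF);
    }

    public static void main(String[] args) {
        // Opaque red: the same value as Android's Color.RED.
        System.out.println(Integer.toHexString(pack(255, 255, 0, 0))); // prints ffff0000

        // Half-transparent green, for overlays that should not hide the image.
        System.out.println(Integer.toHexString(pack(128, 0, 255, 0))); // prints 8000ff00
    }
}
```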

Handling context objects in a VisionProcessor

The userContext parameter in onDrawFrame is the key to coordinating between your processing and drawing steps. Whatever object you return from processFrame is passed in as userContext in the next onDrawFrame call — this is how you transfer detection results (like a list of rectangles) from one step to the other without shared mutable state.

public class SimpleVisionProcessor implements VisionProcessor {

@Override

public void init(int width, int height, CameraCalibration calibration) {

// Initialization code, if needed

}

@Override

public Object processFrame(Mat frame, long captureTimeNanos) {

// Process the frame, detect shapes, and store them in the context object

List<Rect> detectedRects = detectRects(frame);

// Return the context object as userContext

return detectedRects;

}

@Override

public void onDrawFrame(Canvas canvas, int onscreenWidth, int onscreenHeight, float scaleBmpPxToCanvasPx, float scaleCanvasDensity, Object userContext) {

// Render detected shapes using the userContext (which is the context object)

drawRects(canvas, (List<Rect>) userContext);

}

// TODO: Implement detectRects and drawRects...

}

Note the type cast in onDrawFrame — since userContext is typed as Object, you need to cast it back to the expected type before using it.
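Because userContext can be null (when processFrame returns null) or, after a refactor, an unexpected type, it can be worth checking before casting. The following is a minimal plain-Java sketch of that guard. The two static methods only imitate the shape of processFrame and onDrawFrame, rectangles are simplified to int arrays, and all names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Sketch of a guarded cast for the context hand-off; not SDK code.
final class UserContextSketch {

    // Stand-in for processFrame: returns the detections as a plain Object,
    // or null when nothing was detected.
    static Object processFrame(boolean detectSomething) {
        if (!detectSomething) {
            return null;
        }
        List<int[]> rects = new ArrayList<>();
        rects.add(new int[] {50, 50, 200, 200}); // x, y, width, height
        return rects;
    }

    // Stand-in for onDrawFrame: checks the type before casting and
    // returns how many rectangles it would draw.
    @SuppressWarnings("unchecked")
    static int onDrawFrame(Object userContext) {
        if (!(userContext instanceof List)) {
            return 0; // null or unexpected type: nothing to draw
        }
        List<int[]> rects = (List<int[]>) userContext;
        return rects.size();
    }

    public static void main(String[] args) {
        System.out.println(onDrawFrame(processFrame(true)));  // prints 1
        System.out.println(onDrawFrame(processFrame(false))); // prints 0
    }
}
```

The instanceof check covers the null case too, since `null instanceof List` is false.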

OpenCV to Android Canvas position transformations

The Canvas and the OpenCV image may differ in size and aspect ratio, so you need to scale coordinates before drawing. The scaleBmpPxToCanvasPx parameter provided to onDrawFrame handles this. Here’s a utility method that converts an OpenCV Rect into Android Canvas coordinates:

private android.graphics.Rect makeGraphicsRect(Rect rect, float scaleBmpPxToCanvasPx) {

int left = Math.round(rect.x * scaleBmpPxToCanvasPx);

int top = Math.round(rect.y * scaleBmpPxToCanvasPx);

int right = left + Math.round(rect.width * scaleBmpPxToCanvasPx);

int bottom = top + Math.round(rect.height * scaleBmpPxToCanvasPx);

return new android.graphics.Rect(left, top, right, bottom);

}

And here’s how you’d use it in onDrawFrame:

@Override

public void onDrawFrame(Canvas canvas, int onscreenWidth, int onscreenHeight, float scaleBmpPxToCanvasPx, float scaleCanvasDensity, Object userContext) {

Rect rect = ...; // OpenCV rectangle

Paint rectPaint = new Paint();

rectPaint.setColor(Color.RED);

rectPaint.setStyle(Paint.Style.STROKE);

rectPaint.setStrokeWidth(scaleCanvasDensity * 4);

canvas.drawRect(makeGraphicsRect(rect, scaleBmpPxToCanvasPx), rectPaint);

}
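To check the scaling arithmetic by hand, here is the same calculation as makeGraphicsRect with the OpenCV and Android types swapped for plain ints, so it runs anywhere. The class name and the array return type are choices made for this sketch only. As a worked case, assume a 640x480 frame drawn on a 960x720 canvas, which would give a scale factor of 1.5.

```java
// Same arithmetic as makeGraphicsRect above, using plain ints instead of
// OpenCV and Android Rect types. Names are invented for illustration.
final class ScaleSketch {

    // Returns {left, top, right, bottom} in canvas pixels.
    static int[] toCanvas(int x, int y, int width, int height, float scaleBmpPxToCanvasPx) {
        int left = Math.round(x * scaleBmpPxToCanvasPx);
        int top = Math.round(y * scaleBmpPxToCanvasPx);
        int right = left + Math.round(width * scaleBmpPxToCanvasPx);
        int bottom = top + Math.round(height * scaleBmpPxToCanvasPx);
        return new int[] {left, top, right, bottom};
    }

    public static void main(String[] args) {
        // A 200x100 rectangle at (100, 50) in image pixels, scaled by 1.5.
        int[] r = toCanvas(100, 50, 200, 100, 1.5f);
        System.out.println(java.util.Arrays.toString(r)); // prints [150, 75, 450, 225]
    }
}
```

Note that the width and height are scaled too, not just the top-left corner; forgetting that is a common reason for annotations that start in the right place but end up the wrong size.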

You can find the full example code here and test it out on EOCV-Sim or even your own robot!


Workspaces

A workspace is a folder containing .java source files and resource files that EOCV-Sim compiles on-the-fly. This means you don’t need to run Gradle builds — changes are picked up and applied within seconds.

Selecting a workspace

Workspaces aren’t tied to any specific IDE or text editor: you just need to point EOCV-Sim at a folder containing .java files. An eocvsim_workspace.json file in the folder controls the build process (covered in the next section).

By default, the sim creates and uses a workspace at ~/.eocvsim/default_workspace, which includes a sample GrayscalePipeline.java. To switch to a different folder:

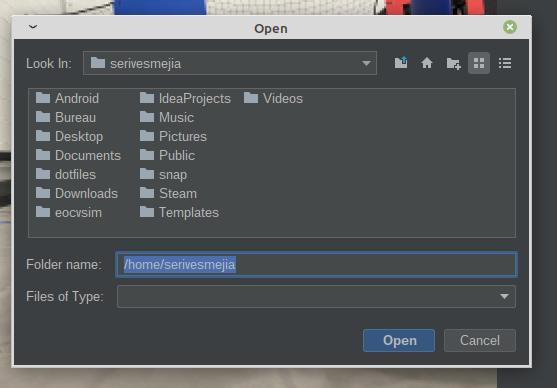

- Go to the “Pipelines” section and click Workspace → Select workspace

- Select a folder in the file explorer that opens

- The sim will set the selected folder as the active workspace, generate an eocvsim_workspace.json if one doesn’t exist, and compile the .java files inside.


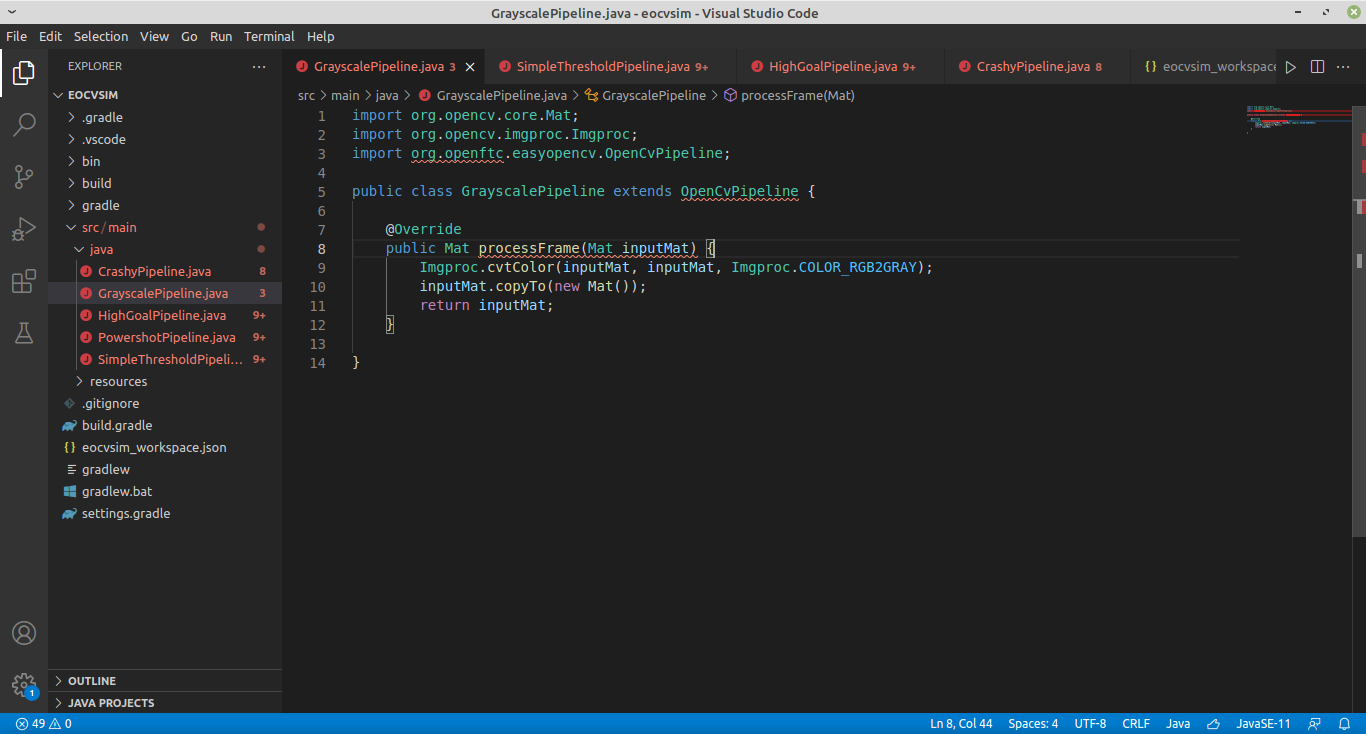

VS Code and IntelliJ IDEA

IntelliJ IDEA and VS Code are the IDE and text editor, respectively, that we recommend for EOCV-Sim. IntelliJ is a fully featured IDE designed specifically for Java, with consistent autocompletion, refactoring features, and more, while VS Code is more lightweight and runs faster on computers with limited resources.

This guide explains how to use either of the two; choose whichever suits you best.

VS Code

Make sure you have installed a JDK as explained in the Downloading EOCV-Sim section.

- Download VS Code here if you haven’t already.

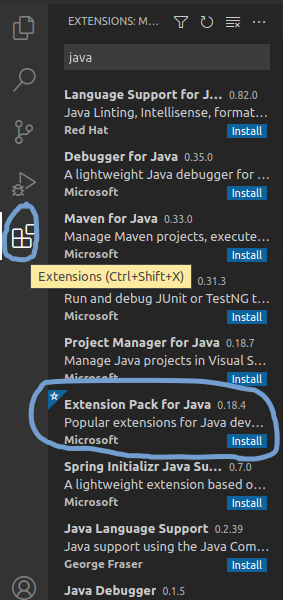

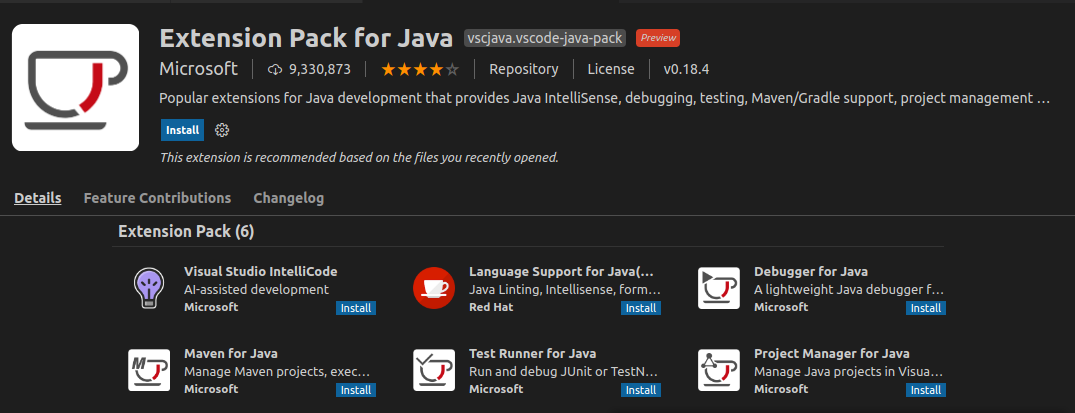

- Open VS Code and install the Extension Pack for Java: go to the extensions section, search for “java” in the search box at the top, and find the extension that looks like the one in the screenshot.

- Click on the blue “Install” button and restart VS Code.

- Do the steps specified in the Creating a Gradle workspace section

- Once you have done the steps in that section, go back to VS Code. If it wasn’t opened automatically by EOCV-Sim, open it manually and select the folder you created in EOCV-Sim.

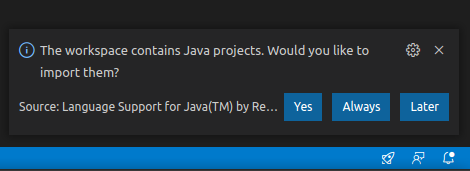

- If the language support plugin asks to import the project on the bottom right, click on yes.

- Wait for the import process to finish; see the tiny loading icon in the bottom right.

- Expand the src/main/java folder. This is where you will put your pipelines.

- To create a new pipeline, right-click on the java folder and choose “New File”. Give the file a name with a .java extension appended at the end, for example GrayscalePipeline.java.

- Copy and paste this template as a base for your pipeline. Replace the name of the class, where indicated, with the name you gave the file:

import org.opencv.core.Mat;

import org.opencv.imgproc.Imgproc;

import org.openftc.easyopencv.OpenCvPipeline;

public class <Name Here> extends OpenCvPipeline {

@Override

public Mat processFrame(Mat inputMat) {

// Your code here

return inputMat;

}

}

If you have EOCV-Sim open, a new build runs every time you save the file in the editor (you can use Ctrl + S). If your pipeline compiled successfully, it will be added to the list with a “gears” icon to differentiate it.

However, if the build failed, you will be presented with an output error message saying where the errors are located exactly. VS Code IntelliSense should help you with finding these issues.

Refer to the pipelines section if you want to learn more about pipelines.

IntelliJ IDEA

- Do the steps specified in the Creating a Gradle workspace section

- Open IntelliJ IDEA and import the Gradle workspace you just created:


Alternatively, if you’re not at the starter screen of IntelliJ IDEA, you can also do the following:


- Navigate to the src/main/java folder, where you’ll find the packages in which you can start adding your own pipelines


- Create a new Java class anywhere within the src/main/java folder. To create a pipeline, you can start with this template:

import org.opencv.core.Mat;

import org.opencv.imgproc.Imgproc;

import org.openftc.easyopencv.OpenCvPipeline;

public class <Name Here> extends OpenCvPipeline {

@Override

public Mat processFrame(Mat inputMat) {

// Your code here

return inputMat;

}

}

If EOCV-Sim is open, a new build runs every time you make a change in IntelliJ. If your pipeline compiles successfully, it is added to the pipeline list with a “gears” icon to differentiate it.

Creating a Gradle workspace

- Open EOCV-Sim (follow this page if needed)

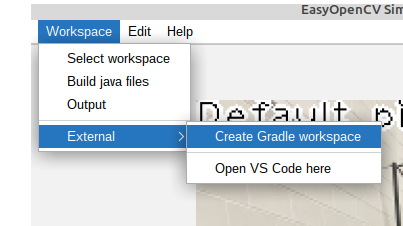

- In the top bar menu, go to Workspace -> External -> Create Gradle Workspace

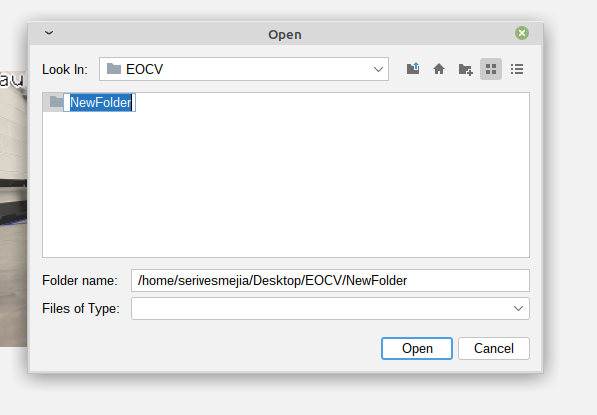

- In the file explorer, select an empty folder; a folder that already contains files can’t be used. To create a new one, click on the folder icon with a “+” at the top and give the new folder a name.

- Select the newly created folder and click on “Open”.

A dialog will pop up asking whether you want to open VS Code.

- If you were following the VS Code guide, click on “Yes” once it asks if you want to open it, and go back to step 5 of the VS Code section.

- If you were following the IntelliJ IDEA guide, click on “No” and go back to step 2 of the IntelliJ IDEA section.

Android Studio

If you want to use EOCV-Sim with OpenCvPipelines that are already in Android Studio, that’s easily possible using the workspaces feature.

To achieve this, you need to isolate your pipeline’s source files into their own package. EOCV-Sim only implements a very small part of the FTC SDK, so compiling a class that references SDK classes such as DcMotor will fail, because those classes don’t exist in EOCV-Sim.

The only classes from the FTC SDK and EasyOpenCV that have been implemented are…

| Package | Classes |
|---|---|
| org.firstinspires.ftc.robotcore.external | Telemetry (partially), Func |
| org.firstinspires.ftc.robotcore.external.function | Consumer |
| org.firstinspires.ftc.vision | * (everything) |
| com.qualcomm.robotcore.eventloop.opmode | OpMode |
| com.qualcomm.robotcore.util | ElapsedTime, MovingStatistics, Range, Statistics |
| org.openftc.easyopencv | OpenCvPipeline, OpenCvTracker, OpenCvTrackerApiPipeline, TimestampedOpenCvPipeline |
| org.opencv | * (everything) |

This also means that, outside of OpModes, you do not need to use the OpenCvCamera-related classes in EOCV-Sim; inputs are simulated using Input Sources.

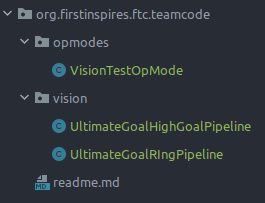

For example, you can have the following package structure in your Android Studio project to isolate OpenCvPipelines and load them into EOCV-Sim:

The VisionTestOpMode class can freely use any of the FTC SDK or EasyOpenCV classes, while the classes under the vision package should only use the ones listed in the table above, plus the whole OpenCV library, of course.

Now, you will select the vision package as a workspace in EOCV-Sim. To select a workspace you can go to Workspace -> Select workspace, like in the gif shown below (both options showcased do the same thing):

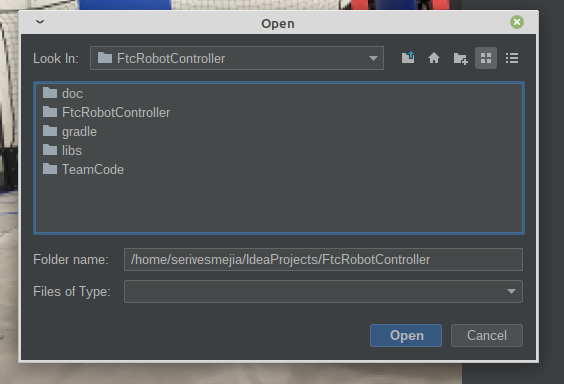

To find the vision folder in the project, first locate the root folder of your FTC SDK project in the file selector, something that looks like this:

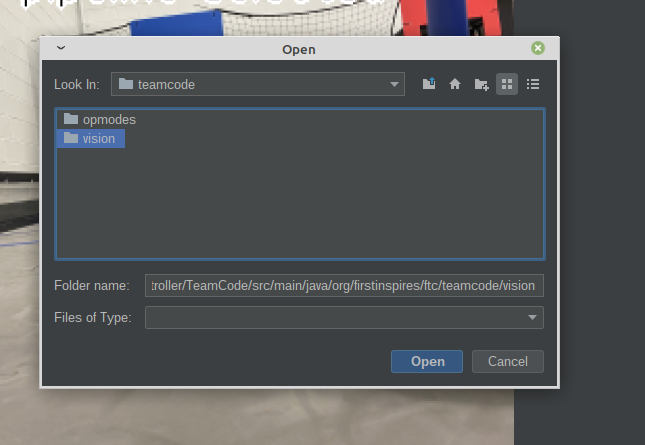

Then, navigate through the folders TeamCode/src/main/java/org/firstinspires/ftc/teamcode and you will find the following:

Select the vision folder and click on “Open”. The pipelines inside will be compiled in a few moments, and they will appear in the pipeline selector once the build finishes successfully:

And now you are done! You can modify your pipelines from Android Studio and see the changes live. Refer to the features section to learn more about the additional features of EOCV-Sim.

This article was last modified...

On 2026-03-21 01:07:14 -06:00

By Sebastian Erives

See it here:9f222a6ce64832a9c20251660c897366ca06ec74

Input Sources

To provide more flexibility, EOCV-Sim allows feeding your pipeline with images coming from different sources. You can feed a single static image or a moving video stored on your computer’s disk, or stream frames from a webcam connected to your computer. These will be explained next.

- Image Source:

  - Feeds your pipeline with a static image stored on your computer’s disk.

  - To save resources, your pipeline runs just once when you select an image source. You can optionally resume pipeline execution by clicking the “Pause” button under the pipeline selector.

- Camera Source:

  - Feeds your pipeline with a constantly changing video stream from a specified camera plugged into your computer.

  - Unlike image sources, camera sources do not pause the execution of your pipeline by default, but you can click the “Pause” button to pause it at any time.

- Video Source:

  - Feeds your pipeline with a constantly changing video stream from a file on your disk. The pause rules are the same as for camera sources.

  - The recommended video format is *.avi, although it depends on your operating system’s support.

Variable Tuner

Basics

Starting with EOCV-Sim v2.0.0, the simulator includes a variable tuner inspired by FTC Dashboard. It lets you edit public, non-final variables from your pipeline in real time, seamlessly, through Java reflection.

The “blur” variable simply consists of a public, non-final field declared in the DefaultPipeline, which is automatically detected and displayed by the simulator:

Supported Types

The tuner supports a handful of Java types, such as most primitives (int, float, double, boolean…) and some other types from OpenCV. The full list of types currently supported by the tuner on the latest version is:

Java:

- int (or Integer)

- float (or Float)

- double (or Double)

- long (or Long)

- boolean (or Boolean)

- String

- Enums

OpenCV:

- Scalar

- Rect

- Point
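As a rough illustration of how reflection can find such fields, the sketch below scans a class for public, non-final fields whose type is one of the JDK types listed above. It is not EOCV-Sim's actual implementation: ExamplePipeline, TunableFieldScan, isTunable and findTunableFields are hypothetical names made up for this example, the sample class is a plain stand-in rather than a real OpenCvPipeline, and the OpenCV types (Scalar, Rect, Point) are left out so that it runs with the JDK alone.

```java
import java.lang.reflect.Field;
import java.lang.reflect.Modifier;
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

// Hypothetical stand-in for a pipeline class (not a real OpenCvPipeline).
class ExamplePipeline {
    public int blur = 5;             // public, non-final, supported type: tunable
    public String label = "ring";    // String is on the supported list: tunable
    public final int maxBlur = 50;   // final: skipped
    public Thread worker = null;     // type not on the supported list: skipped
    private double hidden = 1.0;     // not public: skipped
}

class TunableFieldScan {
    // JDK types from the supported list; OpenCV types are omitted in this sketch.
    private static final Set<Class<?>> SUPPORTED = Set.of(
            int.class, Integer.class, float.class, Float.class,
            double.class, Double.class, long.class, Long.class,
            boolean.class, Boolean.class, String.class);

    // True for public, non-final fields of a supported type (or any enum type).
    static boolean isTunable(Field field) {
        int mods = field.getModifiers();
        Class<?> type = field.getType();
        return Modifier.isPublic(mods) && !Modifier.isFinal(mods)
                && (SUPPORTED.contains(type) || type.isEnum());
    }

    // Collects the names of the tunable fields declared directly in the class.
    static List<String> findTunableFields(Class<?> pipelineClass) {
        List<String> names = new ArrayList<>();
        for (Field field : pipelineClass.getDeclaredFields()) {
            if (isTunable(field)) {
                names.add(field.getName());
            }
        }
        return names;
    }

    public static void main(String[] args) throws Exception {
        ExamplePipeline pipeline = new ExamplePipeline();
        System.out.println(findTunableFields(ExamplePipeline.class));

        // Changing a value at runtime, as a tuner UI might do.
        Field blur = ExamplePipeline.class.getField("blur");
        blur.setInt(pipeline, 9);
        System.out.println(pipeline.blur); // prints 9
    }
}
```

Because the value is written straight into the live object, the next frame processed by the pipeline would see the new value, which is what makes real-time tuning possible.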

Sample Usage

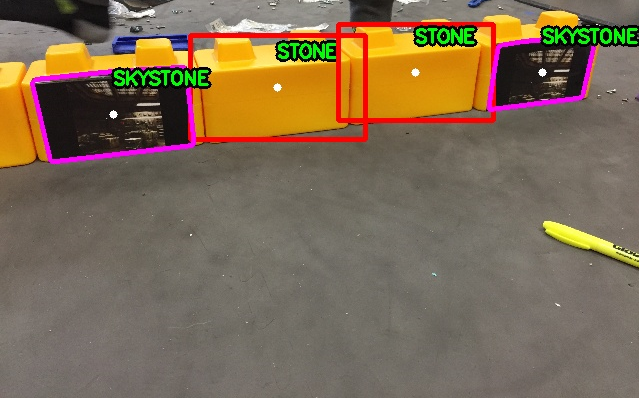

Let’s say we need to tune a threshold for finding the ring stack in the 2020-2021 “Ultimate Goal” game. For this, we will use the YCrCb color space, since it’s one of the most used in FTC and behaves better under different lighting conditions (see this article for a more extended explanation and a comparison of different color spaces).

We can write a simple pipeline for achieving this, taking advantage of the variable tuner. Here’s an example code with detailed comments:

package org.firstinspires.ftc.teamcode;

import org.opencv.core.Core;

import org.opencv.core.Mat;

import org.opencv.core.Scalar;

import org.opencv.imgproc.Imgproc;

import org.openftc.easyopencv.OpenCvPipeline;

public class SimpleThresholdPipeline extends OpenCvPipeline {

/*

* These are our variables that will be

* modifiable from the variable tuner.

*

* Scalars in OpenCV are generally used to

* represent color. So our values in the

* lower and upper Scalars here represent

* the Y, Cr and Cb values respectively.

*

* YCrCb, like most color spaces, ranges

* from 0-255, so we default to those

* min and max values here for now, meaning

* that all pixels will be shown.

*/

public Scalar lower = new Scalar(0, 0, 0);

public Scalar upper = new Scalar(255, 255, 255);

/*

* A good practice when writing EOCV pipelines is

* declaring the Mats you will use here at the top

* of your pipeline, to reuse the same buffers every

* time. This removes the need to call mat.release()

* with every Mat you create on the processFrame method,

* and therefore reduces the possibility of getting a

* memory leak and causing the app to crash due to an

* "Out of Memory" error.

*/

private Mat ycrcbMat = new Mat();

private Mat binaryMat = new Mat();

private Mat maskedInputMat = new Mat();

@Override

public Mat processFrame(Mat input) {

/*

* Converts our input mat from RGB to YCrCb.

* EOCV ALWAYS returns RGB mats, so you'd

* always convert from RGB to the color

* space you want to use.

*

* Takes our "input" mat as an input, and outputs

* to a separate Mat buffer "ycrcbMat"

*/

Imgproc.cvtColor(input, ycrcbMat, Imgproc.COLOR_RGB2YCrCb);

/*

* This is where our thresholding actually happens.

* Takes our "ycrcbMat" as input and outputs a "binary"

* Mat to "binaryMat" of the same size as our input.

* "Discards" all the pixels outside the bounds specified

* by the scalars above (and modifiable with EOCV-Sim's

* live variable tuner.)

*

* Binary meaning that we have either a 0 or 255 value

* for every pixel.

*

* 0 represents our pixels that were outside the bounds

* 255 represents our pixels that are inside the bounds

*/

Core.inRange(ycrcbMat, lower, upper, binaryMat);

/*

* Release the reusable Mat so that old data doesn't

* affect the next step in the current processing

*/

maskedInputMat.release();

/*

* Now, with our binary Mat, we perform a "bitwise and"

* to our input image, meaning that we will perform a mask

* which will include the pixels from our input Mat which

* are "255" in our binary Mat (meaning that they're inside

* the range) and will discard any other pixel outside the

* range (RGB 0, 0, 0. All discarded pixels will be black)

*/

Core.bitwise_and(input, input, maskedInputMat, binaryMat);

/*

* The Mat returned from this method is the

* one displayed on the viewport.

*

* To visualize our threshold, we'll return

* the "masked input mat" which shows the

* pixels from the input Mat that were inside

* the threshold range.

*/

return maskedInputMat;

}

}

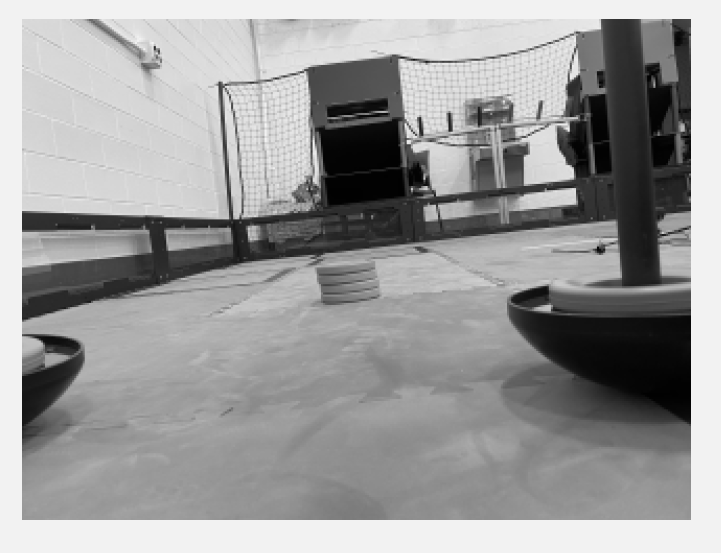

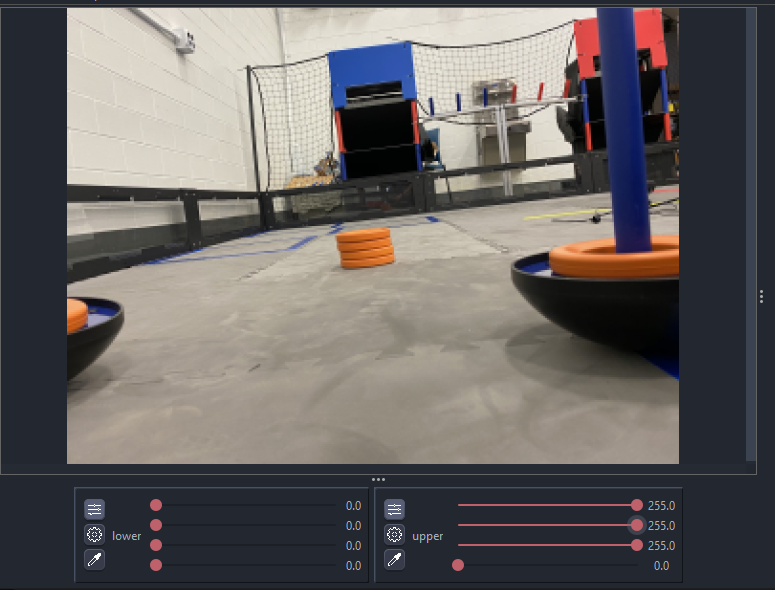

And so, when initially selecting this pipeline in the simulator, its initial state should look something like this:

All pixels from the input Mat are entirely visible because we specified a range from 0 (lower) to 255 (upper) for all three channels (see the slider values). Since those values are the minimum (0%) and maximum (100%) for YCrCb respectively, all pixels pass through our “threshold”. The last slider can be ignored, since we don’t have a fourth color channel.
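To make the thresholding rule concrete, here is a plain-Java sketch of the per-pixel check that Core.inRange applies, written without OpenCV so that it runs on its own. InRangeSketch and its inRange method are hypothetical names used only for illustration, pixels are simple int arrays holding {Y, Cr, Cb}, and the sample pixel values are made up.

```java
class InRangeSketch {
    // Returns 255 if every channel lies within [lower, upper] (both inclusive), else 0.
    static int inRange(int[] pixel, int[] lower, int[] upper) {
        for (int c = 0; c < pixel.length; c++) {
            if (pixel[c] < lower[c] || pixel[c] > upper[c]) {
                return 0;
            }
        }
        return 255;
    }

    public static void main(String[] args) {
        int[] pixel = {150, 180, 90}; // made-up Y, Cr, Cb values

        // Default bounds (0 to 255 on every channel): every pixel passes.
        System.out.println(inRange(pixel, new int[]{0, 0, 0}, new int[]{255, 255, 255})); // prints 255

        // Raising the lower Cr bound above the pixel's Cr value discards it.
        System.out.println(inRange(pixel, new int[]{0, 190, 0}, new int[]{255, 255, 255})); // prints 0
    }
}
```

Moving a slider in the tuner corresponds to changing one entry of the lower or upper bound, which is why tightening a single channel is enough to drop a pixel from the mask.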

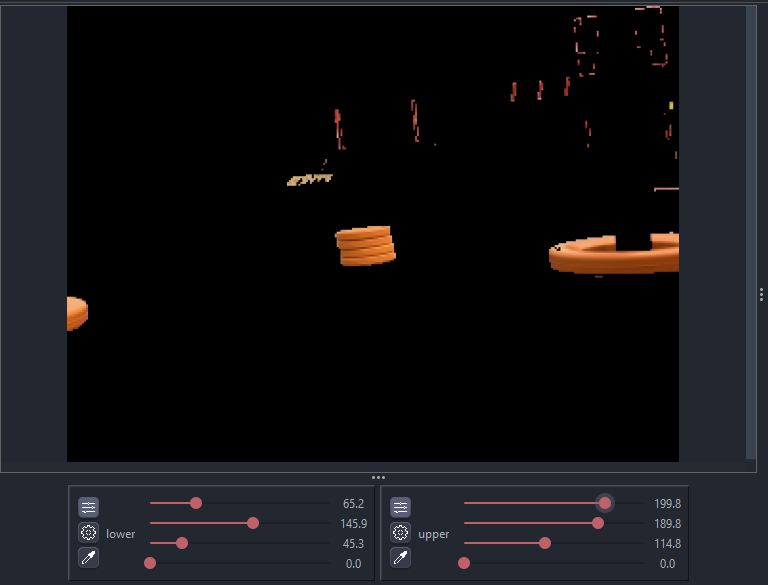

After a bit of playing around with the sliders, it’s possible to come up with some decent values that successfully isolate the orange ring stack from everything else:

A problem with the YCrCb color space, especially for this season’s game, is that the difference between red and orange is very subtle. So we need to play with the values for a good while until we find some that filter out the red from the goals (in the image you can see there are still red leftovers at the top right) but still display the ring stack. Alternatively, we can combine thresholding with another technique, such as FTCLib’s contour ring pipeline with the “horizon” mechanism.

To keep this explanation simple, you can find the final pipeline here with some additional features, in the TeamCode module, since it serves as a good sample alongside other sample classes from EOCV itself.

Telemetry

It’s sometimes useful to log data from your vision code to see results in real time. To do this, EOCV-Sim partially implements the basic Telemetry interface present in the FTC SDK (e.g. methods like Telemetry#talk are not implemented), in keeping with EOCV-Sim’s main idea of “easily copy-pasting into an FTC SDK project”.

Telemetry in VisionProcessor

To use telemetry in a processor, you need a constructor that takes a Telemetry parameter and saves it into an instance variable. This is demonstrated in the following snippet:

import android.graphics.Canvas;

import org.firstinspires.ftc.robotcore.internal.camera.calibration.CameraCalibration;

import org.firstinspires.ftc.vision.VisionProcessor;

import org.opencv.core.Mat;

import org.opencv.imgproc.Imgproc;

import org.firstinspires.ftc.robotcore.external.Telemetry;

public class TelemetryProcessor implements VisionProcessor {

Telemetry telemetry;

public TelemetryProcessor(Telemetry telemetry) {

this.telemetry = telemetry;

}

@Override

public void init(int width, int height, CameraCalibration calibration) {

// Not useful in this case, but we do need to implement it either way

}

@Override

public Object processFrame(Mat input) {

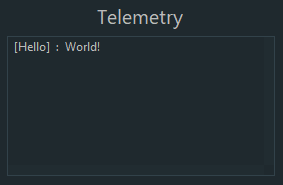

telemetry.addData("[Hello]", "World!");

telemetry.update();

return null; // No need for a context object

}

@Override

public void onDrawFrame(Canvas canvas, int onscreenWidth, int onscreenHeight, float scaleBmpPxToCanvasPx, float scaleCanvasDensity, Object userContext) {

// Not useful either

}

}

The basic idea of telemetry is to send data using the Telemetry#addData or Telemetry#addLine methods. Once you finish adding data, you call Telemetry#update to display it. This also clears the previous state of the telemetry, so messages sent before an earlier call to update are no longer displayed.
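The add-then-update cycle can be pictured with a small stand-alone sketch. SketchTelemetry below is a hypothetical, simplified stand-in written for this example; it is neither the FTC SDK's Telemetry interface nor EOCV-Sim's implementation, and the "caption : value" formatting is an assumption. It only mimics the behaviour described above: items are buffered until update() is called, then shown, and the buffer is cleared.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical, simplified stand-in for a telemetry object.
class SketchTelemetry {
    private final List<String> pending = new ArrayList<>();
    private List<String> displayed = new ArrayList<>();

    // Buffers a caption/value pair until the next update().
    void addData(String caption, Object value) {
        pending.add(caption + " : " + value);
    }

    // Buffers a free-form line until the next update().
    void addLine(String line) {
        pending.add(line);
    }

    // Replaces what is displayed with the buffered items, then clears the buffer.
    void update() {
        displayed = new ArrayList<>(pending);
        pending.clear();
    }

    List<String> getDisplayed() {
        return displayed;
    }
}

class SketchTelemetryDemo {
    public static void main(String[] args) {
        SketchTelemetry telemetry = new SketchTelemetry();

        telemetry.addData("[Hello]", "World!");
        telemetry.update();
        System.out.println(telemetry.getDisplayed()); // prints [[Hello] : World!]

        // The earlier message is gone after the next update().
        telemetry.addLine("Second frame");
        telemetry.update();
        System.out.println(telemetry.getDisplayed()); // prints [Second frame]
    }
}
```

This is why the samples in this article call update() once per frame after all data has been added: each call replaces what was shown for the previous frame.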

Telemetry in OpenCvPipeline

We’ll replicate the example from earlier as an OpenCvPipeline. The core concept remains the same, but the code structure changes a little because it uses a different base type:

import org.opencv.core.Mat;

import org.openftc.easyopencv.OpenCvPipeline;

import org.firstinspires.ftc.robotcore.external.Telemetry;

public class TelemetryPipeline extends OpenCvPipeline {

Telemetry telemetry;

public TelemetryPipeline(Telemetry telemetry) {

this.telemetry = telemetry;

}

@Override

public Mat processFrame(Mat input) {

telemetry.addData("[Hello]", "World!");

telemetry.update();

return input; // Return the input mat

}

}

Building from Source

Building from source is especially useful for users who want to use EOCV-Sim with Kotlin, which workspaces don’t currently support. You download the sim’s source code and build it after adding your own pipelines.

- Make sure you have IntelliJ IDEA installed. Any IDE with Java Gradle support should work, but IntelliJ is strongly recommended.

- Clone EOCV-Sim’s repository using Git, either from the command line:

git clone https://github.com/deltacv/EOCV-Sim

or using IntelliJ:

- After importing EOCV-Sim into IntelliJ, you can start adding your own pipelines to the TeamCode module. Kotlin is already configured in the project, so no additional setup is needed.

- Run EOCV-Sim from IntelliJ with the “Run Simulator” run configuration. You will need to close and rerun the simulator every time you change your pipelines; otherwise you won’t see the changes.
